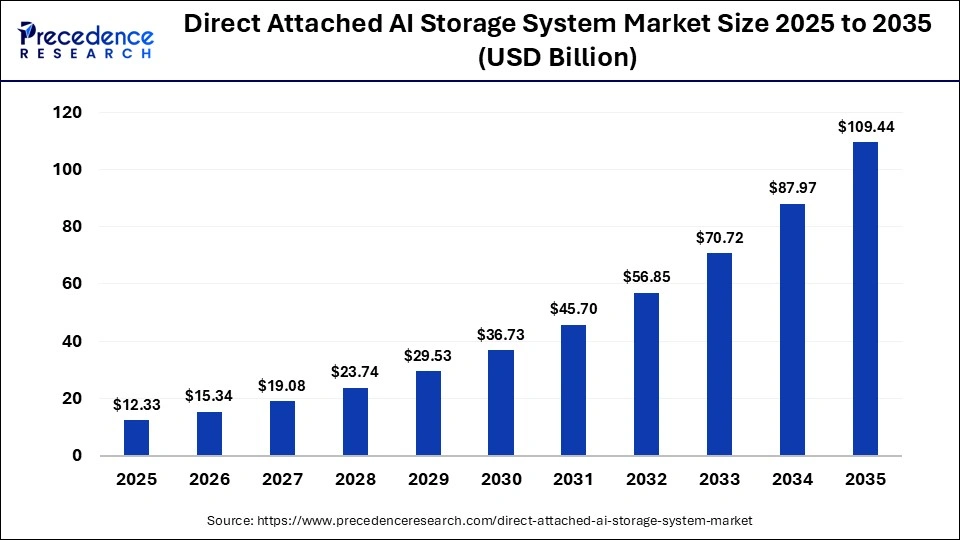

The global direct attached AI storage system market is growing rapidly as organizations seek ultra‑low‑latency, high‑throughput storage to power AI training and inference workloads. The market was valued at USD 12.33 billion in 2025 and is projected to grow from USD 15.34 billion in 2026 to around USD 109.44 billion by 2035, a CAGR of 24.40% from 2026 to 2035.

This article explains what direct attached AI storage systems are and covers the key growth drivers, market segments, and regional outlook for this high‑performance storage segment.

What Are Direct Attached AI Storage Systems?

Direct attached AI storage systems are direct‑attached storage (DAS) devices that connect directly to a host, such as a GPU server or AI‑accelerated workstation, without going through a network, enabling ultra‑low‑latency, high‑throughput data access. They are typically used for AI workloads in which GPU utilization is sensitive to data bottlenecks.

Common features include:

- NVMe SSD‑based all‑flash storage for fast I/O and reduced latency.
- High capacity (often 5 TB and above) to handle large training datasets and real‑time streaming data.
- Tight integration with GPUs and AI accelerators to minimize data‑transfer delays and maximize compute efficiency.

Industries such as cloud AI providers, autonomous driving, healthcare analytics, and financial services increasingly rely on direct attached AI storage to accelerate training and inference pipelines.

Market Size and Growth (2025–2035)

The direct attached AI storage system market is expanding at a double‑digit pace, driven by the explosion of AI‑driven data and the need for real‑time analytics.

Key figures:

- Market size in 2025: USD 12.33 billion
- Market size in 2026 (projected): USD 15.34 billion
- Market size by 2035 (projected): USD 109.44 billion
- CAGR from 2026 to 2035: 24.40%
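The growth rate implied by the 2026 and 2035 figures can be recomputed with the standard compound annual growth rate formula. The snippet below is a minimal sketch for checking the arithmetic, not the report's methodology. The function name `cagr` is illustrative, and it assumes nine annual compounding periods between 2026 and 2035.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Return the compound annual growth rate as a fraction (0.10 == 10%)."""
    if start_value <= 0 or end_value <= 0:
        raise ValueError("start_value and end_value must be positive")
    if periods <= 0:
        raise ValueError("periods must be a positive number of years")
    return (end_value / start_value) ** (1 / periods) - 1


# Market sizes from the key figures above, in USD billion.
market_2026 = 15.34
market_2035 = 109.44

# Assumption: 2026 is the base year, so 2035 is nine compounding periods later.
implied_rate = cagr(market_2026, market_2035, periods=2035 - 2026)
print(f"Implied CAGR 2026-2035: {implied_rate:.2%}")
```

With these endpoint values, the implied rate comes out at roughly 24.4% per year. The 2025 to 2026 step (USD 12.33 billion to USD 15.34 billion) grows at about the same rate.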

Other reports on direct attached AI storage and AI‑powered storage show similarly strong growth, with some studies projecting CAGRs of 18–25% over different time windows, reflecting robust long‑term demand for AI‑focused storage architectures.

What Is Driving Market Growth?

Several interconnected factors are accelerating adoption of direct attached AI storage systems.

1. Demand for Ultra‑Low‑Latency and High‑Throughput Storage

AI training and inference workloads require constant data feeding to GPUs to avoid “GPU starvation,” where compute cores sit idle waiting for data. Direct attached storage reduces network hops and latency, enabling continuous data flow and higher GPU utilization.

2. Rise of AI and Data‑Intensive Workloads

Surging use of machine learning, deep learning, and large‑language models (LLMs) generates massive volumes of structured and unstructured data. Direct attached AI storage systems help manage these datasets efficiently while supporting real‑time analytics and inference.

3. Shift to All‑Flash and NVMe‑Based Architectures

NVMe SSDs and all‑flash arrays are replacing traditional HDDs in AI‑focused storage, delivering faster read/write speeds and lower latency. This shift is critical for applications such as real‑time video analytics, autonomous driving, and genomic analysis, where milliseconds matter.

4. Edge AI and Localized Data Processing

Edge AI deployments in industrial IoT, smart cities, and autonomous vehicles require local, high‑performance storage to process data without relying on distant data centers. Direct attached AI storage systems are well‑suited for edge servers and workstations that combine AI inference with on‑device storage.

5. Data Sovereignty and Security Requirements

Growing emphasis on data residency, privacy, and compliance pushes organizations to keep sensitive AI‑training data on‑premises or in tightly controlled environments, rather than constantly moving it over networks. Direct attached storage supports this by keeping data close to the compute platform while minimizing exposure to network‑based vulnerabilities.

Key Market Segments

The market is segmented by capacity, storage type, end user, application, and region.

By Capacity (2025 Snapshot)

- 5 TB to 20 TB: Held about 30.5% of market share in 2025, favored by small and medium enterprises that need moderate capacity without overspending on ultra‑large systems.
- 20 TB to 50 TB: Dominates enterprise‑grade AI workloads that require large datasets for healthcare, finance, and advanced analytics.
- Above 50 TB and below 5 TB: Cater to very large‑scale data centers and lightweight edge or workstation setups, respectively.

By Storage Type

- Solid State Drive (SSD)‑based systems (especially NVMe) lead due to their high speed, low latency, and reliability for AI and ML workloads.
- Hybrid systems (HDD + SSD caching) are used mainly for cost‑sensitive or archive‑heavy environments where not all data is AI‑critical.

By End User

- Large enterprises: Deploy direct attached AI storage in data centers, cloud platforms, and on‑prem HPC clusters.
- Small and medium enterprises (SMEs): Adopt compact, cost‑efficient direct attached systems for AI‑enabled analytics, edge inference, and lab‑scale training.
- Government and research organizations: Use them in supercomputing and scientific AI projects, where data‑intensive simulations and models are common.

By Application

- AI and machine learning (including deep learning and LLMs).
- Data analytics and real‑time decision‑making in finance, healthcare, and logistics.
- Big data and high‑performance computing (HPC) environments.

Regional Outlook

The market is analyzed across North America, Europe, Asia‑Pacific, Latin America, and the Middle East & Africa.

North America Leads the Market

- North America held the largest market share (around 35% in 2025) and is expected to remain the dominant region through 2035.
- The region is home to major cloud providers, AI startups, and large‑scale data centers that rely heavily on direct attached AI storage for GPU‑driven workloads.

Key drivers in North America include:

- Heavy investment in AI research, cloud infrastructure, and hyperscale data centers.
- Strong adoption of NVMe SSDs and all‑flash architectures for AI and real‑time analytics.

Asia‑Pacific: Fastest‑Growing Region

- The Asia‑Pacific region is projected to gain significant share, rising from about 20% of the market in 2025 to 24% by 2035.
- Countries such as China, Japan, South Korea, and India are scaling up AI research, cloud, and edge computing infrastructure, all of which consume large‑scale, high‑performance storage.
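To see what a shift in regional share means in absolute terms, the share percentages can be applied to the overall market totals. The sketch below is a rough illustration only. It assumes the approximate Asia‑Pacific shares (about 20% in 2025 and 24% by 2035) apply to the total market sizes cited in this article (USD 12.33 billion in 2025 and USD 109.44 billion by 2035). The helper name `segment_value` is hypothetical.

```python
def segment_value(total_market: float, share: float) -> float:
    """Return the value of a segment given the total market and its share (0-1)."""
    if not 0.0 <= share <= 1.0:
        raise ValueError("share must be a fraction between 0 and 1")
    return total_market * share


# Total market sizes cited in this article, in USD billion.
TOTAL_2025 = 12.33
TOTAL_2035 = 109.44

# Approximate Asia-Pacific shares; treated here as exact for illustration.
apac_2025 = segment_value(TOTAL_2025, 0.20)
apac_2035 = segment_value(TOTAL_2035, 0.24)
growth_multiple = apac_2035 / apac_2025

print(f"Asia-Pacific 2025: USD {apac_2025:.2f} billion")
print(f"Asia-Pacific 2035: USD {apac_2035:.2f} billion")
print(f"Implied growth multiple: {growth_multiple:.1f}x")
```

Because the shares are rounded estimates, the results indicate order of magnitude rather than precise regional forecasts. Under these assumptions, a four‑point gain in share on top of overall market growth corresponds to a regional market more than ten times its 2025 size.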

Growth in Asia‑Pacific is supported by:

- Rising government and private‑sector investments in AI, smart cities, and digital transformation.
- Expansion of data centers and hyperscalers in major APAC markets.

Europe: Steady AI‑Storage Growth

- Europe is expected to grow steadily, driven by enterprise AI adoption, stricter data‑protection regulations (such as GDPR), and investment in HPC and cloud platforms.
- European organizations are increasingly adopting direct attached AI storage to ensure low‑latency data access while complying with data‑sovereignty and privacy rules.

Major Players and Industry Developments

The direct attached AI storage system market includes a mix of established storage vendors, AI‑infrastructure providers, and cloud‑native hardware companies.

Key Companies (Examples)

While the exact list varies by source, typical players include:

- Hyperscale cloud and AI‑infrastructure providers building custom‑storage platforms for AI workloads.
- Enterprise storage vendors offering NVMe‑based DAS and AI‑ready storage enclosures for on‑prem and colocation deployments.
- Edge‑focused hardware manufacturers delivering compact, direct‑attached AI storage boxes for edge AI and IoT gateways.

Recent Industry Developments

- Increasing adoption of high‑speed interfaces to connect storage to GPUs and accelerators with minimal latency, along with NVMe over Fabrics (NVMe‑oF) where NVMe storage must be reached across a network fabric rather than attached to a single host.
- AI‑driven storage management software that optimizes data placement, tiering, and caching for AI workloads, improving efficiency and reducing storage costs.
- New DAS‑style product lines targeting AI workstations and edge AI servers, combining high‑capacity NVMe storage with compact form factors.

Conclusion

The direct attached AI storage system market is entering a high‑growth, high‑innovation phase, fueled by the need for ultra‑low‑latency, high‑throughput storage to keep GPUs and AI accelerators fully fed with data. With North America leading today and Asia‑Pacific gaining share rapidly, this market is likely to remain a core enabler of AI infrastructure through 2035.

Get Sample: https://www.precedenceresearch.com/sample/8270