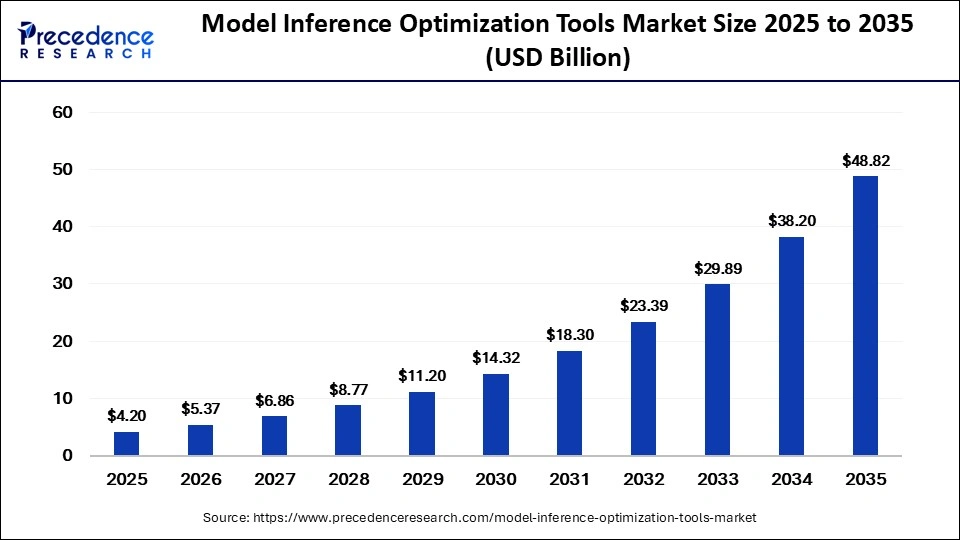

The global model inference optimization tools market was valued at USD 4.20 billion in 2025 and is projected to grow from USD 5.37 billion in 2026 to approximately USD 48.82 billion by 2035, expanding at a CAGR of 27.80% from 2026 to 2035.
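As a quick sanity check on the figures quoted above, the growth rate implied by the 2026 and 2035 market sizes can be recomputed with the standard compound annual growth rate formula. This is a minimal sketch; the function name `cagr` and the variable names are illustrative and not part of the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text, in USD billions.
size_2026 = 5.37
size_2035 = 48.82
periods = 2035 - 2026  # nine compounding periods

implied = cagr(size_2026, size_2035, periods)
print(f"Implied CAGR 2026-2035: {implied:.2%}")
```

The result comes out at roughly 27.8%, which agrees with the stated CAGR to within rounding.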

Model Inference Optimization Tools Market: Accelerating the Future of Efficient AI Deployment

Introduction: Why AI Inference Optimization Matters

Artificial intelligence models are becoming increasingly powerful, but deploying them efficiently at scale remains one of the biggest challenges for enterprises. As organizations integrate AI into real-time applications such as autonomous systems, generative AI, healthcare diagnostics, cybersecurity, and financial analytics, the need for faster and more cost-efficient AI inference has become critical.

This growing demand has fueled the rapid expansion of the model inference optimization tools market, a sector focused on improving the speed, efficiency, and scalability of AI models, and on reducing their energy consumption, during deployment and inference.

Inference optimization tools help organizations reduce latency, lower infrastructure costs, improve throughput, and maximize hardware utilization, enabling AI systems to perform efficiently across cloud, edge, and on-device environments.


Market Overview: Rapid Growth Fueled by AI Adoption

The rapid growth of this market is being driven by:

- Increasing deployment of generative AI and large language models (LLMs)

- Rising demand for low-latency AI applications

- Expansion of edge AI and real-time analytics

- Growing need to reduce AI infrastructure costs

- Increasing adoption of AI accelerators and GPUs

As AI models become larger and more computationally intensive, optimization technologies are becoming essential for enterprise-scale deployment.

What Are Model Inference Optimization Tools?

Model inference optimization tools are software solutions designed to improve the efficiency of AI model execution after training.

These tools optimize how AI models perform in production environments by improving:

- Inference speed

- Memory utilization

- Power efficiency

- Throughput

- Hardware compatibility

They are widely used across:

- Cloud data centers

- Edge devices

- Smartphones

- Autonomous systems

- Industrial IoT environments

Inference optimization is especially important for applications requiring real-time decision-making and low operational costs.
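Two of the metrics named in this section, inference speed and throughput, are usually tracked as latency percentiles and requests per second. The sketch below shows one way to measure them for any callable. The `benchmark` helper and the `fake_model` stand-in are hypothetical and use only the Python standard library; a real measurement would call the deployed model instead.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(run_inference: Callable[[], object],
              iterations: int = 200,
              warmup: int = 20) -> Dict[str, float]:
    """Time repeated calls and report median latency, p95 latency, and throughput."""
    # Warm-up calls are excluded so one-off start-up costs do not skew the numbers.
    for _ in range(warmup):
        run_inference()

    latencies_ms = []
    start = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        run_inference()
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed = time.perf_counter() - start

    latencies_ms.sort()
    p95_index = min(len(latencies_ms) - 1, int(0.95 * len(latencies_ms)))
    return {
        "p50_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "throughput_rps": iterations / elapsed,
    }


def fake_model() -> int:
    """Hypothetical stand-in workload; replace with a real inference call."""
    return sum(i * i for i in range(10_000))


stats = benchmark(fake_model)
print(stats)
```

Running the same harness before and after an optimization step gives a like-for-like comparison, provided the hardware and input sizes are held constant.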

Key Market Trends

1. Explosion of Generative AI and Large Language Models

The rapid adoption of generative AI platforms and large language models is significantly increasing demand for inference optimization solutions.

LLMs require substantial computational resources during inference, especially when serving millions of users simultaneously. Optimization tools help reduce:

- Response latency

- GPU compute costs

- Memory requirements

As enterprises increasingly deploy generative AI applications, inference optimization has become a critical operational priority.
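To illustrate why memory requirements are a central concern for LLM serving, the following sketch estimates the memory needed just to hold a model's weights at different numeric precisions. The 7-billion-parameter model size is a hypothetical example, and the estimate deliberately ignores activations, the key-value cache, and runtime overhead, all of which add to the real footprint.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes), weights only."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


params = 7e9  # hypothetical model size, chosen only for illustration
for precision in BYTES_PER_PARAM:
    print(f"{precision}: {weight_memory_gb(params, precision):.1f} GB")
```

Under these assumptions, moving from 16-bit to 8-bit weights halves the storage requirement, which is one reason lower-precision formats matter for serving cost.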

2. Growth of Edge AI Deployment

Edge AI applications are expanding rapidly across industries such as:

- Automotive

- Healthcare

- Manufacturing

- Retail

- Telecommunications

Edge devices often operate with limited computing power and energy resources, making optimization essential.

Optimization tools enable AI models to run efficiently on:

- Mobile devices

- IoT systems

- Embedded hardware

- Industrial sensors

The edge AI segment is expected to remain one of the strongest drivers of market growth over the next decade.

3. Rising Demand for Quantization and Compression Technologies

Model compression technologies are becoming increasingly important for reducing computational overhead.

Popular Optimization Techniques Include:

- Quantization

- Pruning

- Tensor optimization

- Graph optimization

- Distillation

These methods can significantly improve performance while largely preserving model accuracy when applied carefully.

Quantization tools accounted for a substantial portion of market adoption in 2025 due to their ability to reduce inference costs and improve efficiency.
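As a rough illustration of how quantization works, the sketch below applies symmetric 8-bit quantization to a small list of weights and then reconstructs them. It is a simplified, per-tensor scheme written in plain Python; the function names and sample weights are hypothetical, and production tools typically add calibration data, per-channel scales, and hardware-specific kernels.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.0, 0.631, -0.4]  # hypothetical sample weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integer codes:", q)
print(f"scale: {scale:.5f}, max reconstruction error: {max_error:.5f}")
```

Each weight is stored in one byte instead of four, and the reconstruction error is bounded by half the scale, which is why the accuracy impact is often small for well-behaved weight distributions.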

4. Hardware-Aware AI Optimization

AI optimization tools are increasingly designed to work closely with specialized hardware such as:

- GPUs

- TPUs

- NPUs

- AI accelerators

- FPGA-based systems

Hardware-aware optimization enables organizations to maximize the performance of advanced AI infrastructure.

This trend is becoming especially important as enterprises invest heavily in AI compute ecosystems.

5. AI Infrastructure Cost Reduction Becoming a Strategic Priority

The operational cost of deploying AI models at scale is becoming a major concern for enterprises.

Inference optimization tools help reduce:

- Cloud computing expenses

- Energy consumption

- GPU infrastructure requirements

Organizations are increasingly focusing on optimization to improve the economic sustainability of AI deployments.
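The link between throughput and cost can be made concrete with simple arithmetic: if an instance costs a fixed amount per hour, serving more requests per second on it lowers the cost of each request. The hourly price and throughput figures below are hypothetical and chosen only to show the calculation; they are not taken from the report.

```python
def cost_per_million_requests(hourly_instance_cost_usd: float,
                              requests_per_second: float) -> float:
    """Cost in USD to serve one million requests on a fully utilized instance."""
    requests_per_hour = requests_per_second * 3600
    return hourly_instance_cost_usd / requests_per_hour * 1_000_000


# Hypothetical figures: same instance price, higher throughput after optimization.
hourly_cost = 2.50
baseline = cost_per_million_requests(hourly_cost, requests_per_second=50)
optimized = cost_per_million_requests(hourly_cost, requests_per_second=120)
savings = 1 - optimized / baseline

print(f"baseline:  ${baseline:.2f} per million requests")
print(f"optimized: ${optimized:.2f} per million requests")
print(f"savings:   {savings:.1%}")
```

The sketch assumes the instance is kept fully busy. In practice, utilization, batching behavior, and autoscaling policies also affect the realized savings.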

Market Dynamics

Market Drivers

Increasing Enterprise AI Adoption

Enterprises across industries are rapidly integrating AI into:

- Customer service

- Fraud detection

- Predictive analytics

- Recommendation systems

- Industrial automation

This widespread adoption is driving demand for scalable inference optimization solutions.

Growing Need for Real-Time AI Processing

Applications such as:

- Autonomous vehicles

- Video analytics

- Medical diagnostics

- Financial trading systems

require ultra-low-latency AI processing, increasing the need for optimization tools.

Expansion of AI Cloud Infrastructure

Cloud providers are increasingly offering AI inference services at scale.

Optimization technologies are helping improve:

- Resource utilization

- Infrastructure scalability

- Service performance

Advancements in AI Chips and Accelerators

The rapid development of AI-specific hardware is creating new opportunities for optimization software vendors.

Optimization platforms that support heterogeneous computing environments are becoming highly valuable.

Market Challenges

Complexity of AI Model Architectures

Modern AI models are becoming increasingly complex, making optimization more technically challenging.

Organizations often struggle with:

- Multi-model deployment

- Cross-platform compatibility

- Hardware-specific tuning

Balancing Performance and Accuracy

Aggressive optimization techniques can sometimes reduce model accuracy.

Maintaining optimal performance without compromising reliability remains a major industry challenge.
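The trade-off described above can be seen in a small experiment: the fewer bits used to represent each weight, the larger the reconstruction error. The sketch below quantizes the same randomly generated weights at 8, 4, and 2 bits and reports the root-mean-square error. The weights are synthetic, and weight error is only a proxy for the effect on model accuracy, which has to be measured on real evaluation data.

```python
import random
from typing import List


def quantization_rmse(weights: List[float], bits: int) -> float:
    """Root-mean-square error of symmetric per-tensor quantization at `bits` bits."""
    levels = 2 ** (bits - 1) - 1          # e.g. 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / levels
    squared_error = 0.0
    for w in weights:
        q = max(-levels, min(levels, round(w / scale)))
        squared_error += (w - q * scale) ** 2
    return (squared_error / len(weights)) ** 0.5


random.seed(0)  # fixed seed so the synthetic weights are reproducible
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]
errors = {bits: quantization_rmse(weights, bits) for bits in (8, 4, 2)}

for bits, rmse in errors.items():
    print(f"{bits}-bit RMSE: {rmse:.4f}")
```

The error grows as the bit width shrinks, which is why aggressive settings generally call for validation against a held-out accuracy benchmark before deployment.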

Shortage of Skilled AI Infrastructure Professionals

Deploying and optimizing AI models at scale requires highly specialized expertise, which remains in limited supply globally.

Regional Insights

North America – Dominant Region

North America accounted for the largest market share in 2025 due to:

- Strong AI ecosystem

- Presence of major cloud providers

- Advanced semiconductor industry

- High enterprise AI adoption

The United States remains the global leader in AI infrastructure and optimization technologies.

Asia Pacific – Fastest Growing Region

Asia Pacific is projected to record the highest CAGR during the forecast period.

Growth Drivers Include:

- Rapid digital transformation

- Expansion of AI startups

- Increasing cloud infrastructure investments

- Government AI initiatives

Countries such as China, India, Japan, and South Korea are driving regional growth.

Europe

Europe is witnessing steady market expansion driven by:

- AI regulatory frameworks

- Enterprise digital transformation

- Increasing adoption of industrial AI applications

Competitive Landscape

The model inference optimization tools market is becoming highly competitive as software providers, cloud vendors, and semiconductor companies expand their AI infrastructure capabilities.

Companies are increasingly focusing on:

- Hardware-software integration

- Open-source AI optimization frameworks

- Low-latency AI deployment solutions

- Edge AI optimization technologies

Strategic partnerships between AI software vendors and chip manufacturers are becoming increasingly common.

Future Outlook: Toward Efficient and Scalable AI

The future of AI deployment will heavily depend on inference optimization technologies.

Key Future Trends

- AI-native optimization platforms

- Autonomous AI infrastructure management

- Real-time edge inference optimization

- Energy-efficient AI deployment

- Multi-cloud AI orchestration

- Optimization for multimodal AI models

As AI models continue to grow in complexity and scale, optimization tools will become essential infrastructure components for sustainable AI adoption.

Conclusion

The model inference optimization tools market is emerging as one of the most critical segments of the AI infrastructure ecosystem. As organizations scale AI deployment across cloud, edge, and enterprise environments, optimizing inference performance is becoming a strategic necessity.

Get a Sample Copy: https://www.precedenceresearch.com/sample/8383
